Remember when the most invasive thing in your home was a nosy neighbor named Gladys who liked to peek through your blinds? If Gladys heard you talking about your gout, the worst she could do was tell the bridge club. Today, Gladys has been replaced by a sleek, glowing cylinder that sits on your kitchen counter, answers to a cheerful name, and has a memory capacity that would terrify an elephant.

We are living in the era of the “AI Companion.” These digital sidekicks are marketed as the perfect cure for loneliness, ready to play trivia, remind you to take your pills, or just chat about the weather. But here’s the rub: unlike a real-life friend, your AI companion is secretly taking notes.

Inviting an AI into your home is like taking in a very helpful, highly intelligent roommate who works part-time for an advertising agency. Before you hand over the keys to your personal life, we need to have a little chat about privacy. Let’s look at how to enjoy the benefits of these digital buddies without accidentally broadcasting your medical history to a server farm in Silicon Valley.

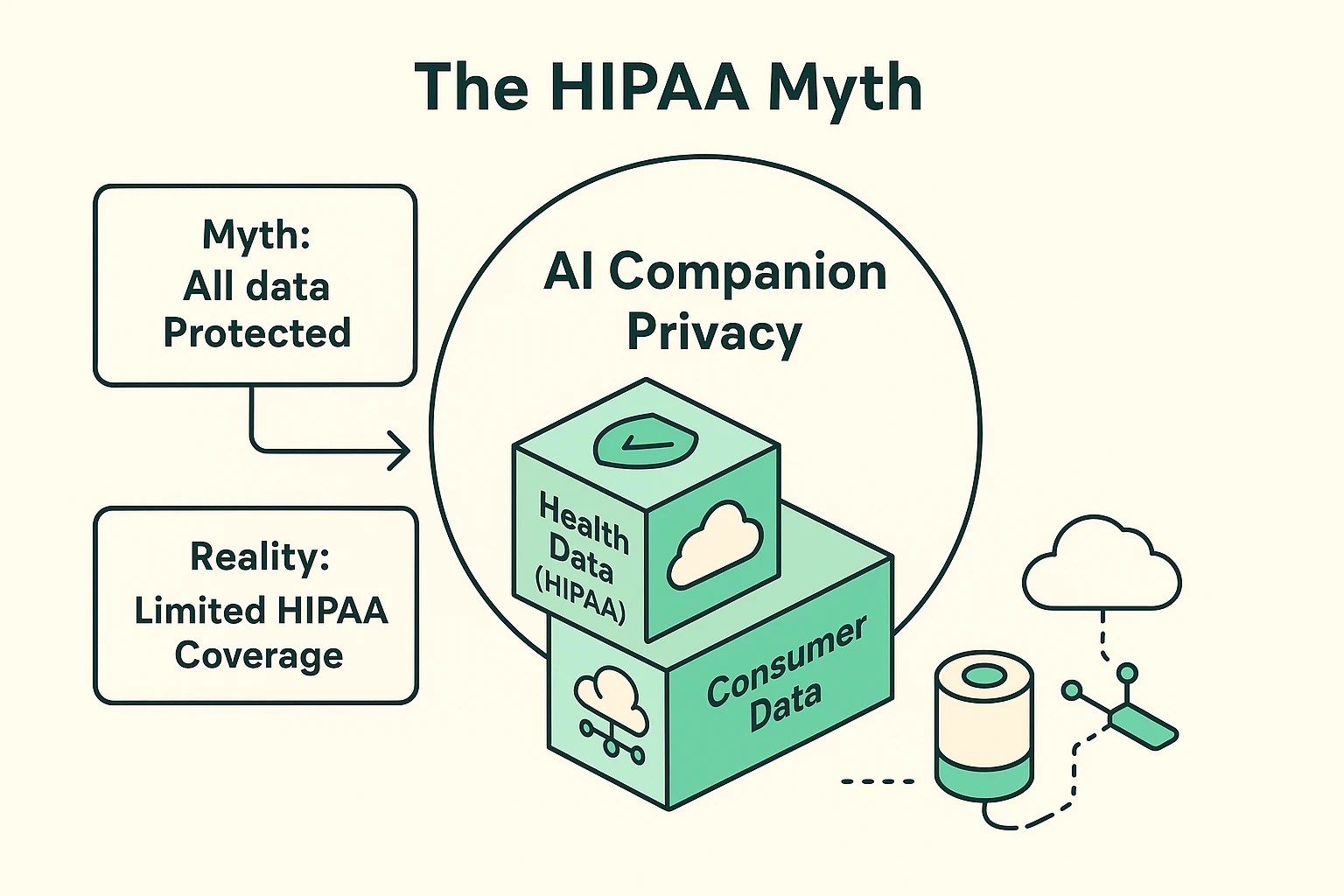

Let’s start by busting the biggest myth in senior tech: the idea that anything discussing your health is legally bound to keep its digital mouth shut. Most of us assume that if an AI reminds you to take your blood pressure medication, it’s automatically covered by HIPAA. That is the federal law that keeps your actual doctor from blabbing about your ailments at the golf course.

Spoiler alert: It probably isn’t. HIPAA applies to health care providers, insurers, and the businesses that handle records on their behalf, and most AI companions are sold as “Direct-to-Consumer” gadgets by companies that are none of those. This means that while your doctor’s files are locked up tighter than Fort Knox, your chat with a robot about your arthritis might be legally treated the same as a search for “best slip-on shoes.”

This is what elder law experts call the “HIPAA Trap.” You share intimate health details with a soothing digital voice, assuming there’s a protective legal umbrella over you. In reality, that information is often scooped up as basic consumer data, which tech companies can potentially use, analyze, or even share with their partners.

The lesson here? Treat your AI like a casual acquaintance at a bus stop. If you wouldn’t tell a total stranger about your cholesterol levels, think twice before sharing them with your smart speaker.

Now, let’s talk about how these digital gadgets actually “think.” When you ask your AI a question, that voice data doesn’t just stay inside the plastic box. Usually, it’s beamed up to “The Cloud,” which is just a fancy tech industry term for “someone else’s massive computer.”

This is known as Cloud Processing. Your voice flies through the internet, gets analyzed by a mega-computer, and the answer flies back. It’s incredibly fast, but it means your private conversations are physically leaving your house.

But there is a hero in this story, and it’s called “Edge AI” or “Local Processing.” This means the device does all its thinking right there inside the physical box sitting on your coffee table. Your voice never leaves the room.

When shopping for an AgeTech AI companion, looking for “Local Processing” is like looking for a diary with a physical padlock. It is the gold standard for privacy and a powerful feature that families should actively seek out.

We all know the drill. You buy a new gadget, plug it in, and immediately encounter a Terms of Service (ToS) agreement that is longer than the Old Testament. The print is tiny, the language is dense, and your desire to just play some Frank Sinatra is overwhelming.

So, you blindly click “I Agree.” Congratulations! You may have just legally consented to letting a tech company use your voice recordings to train future robots.

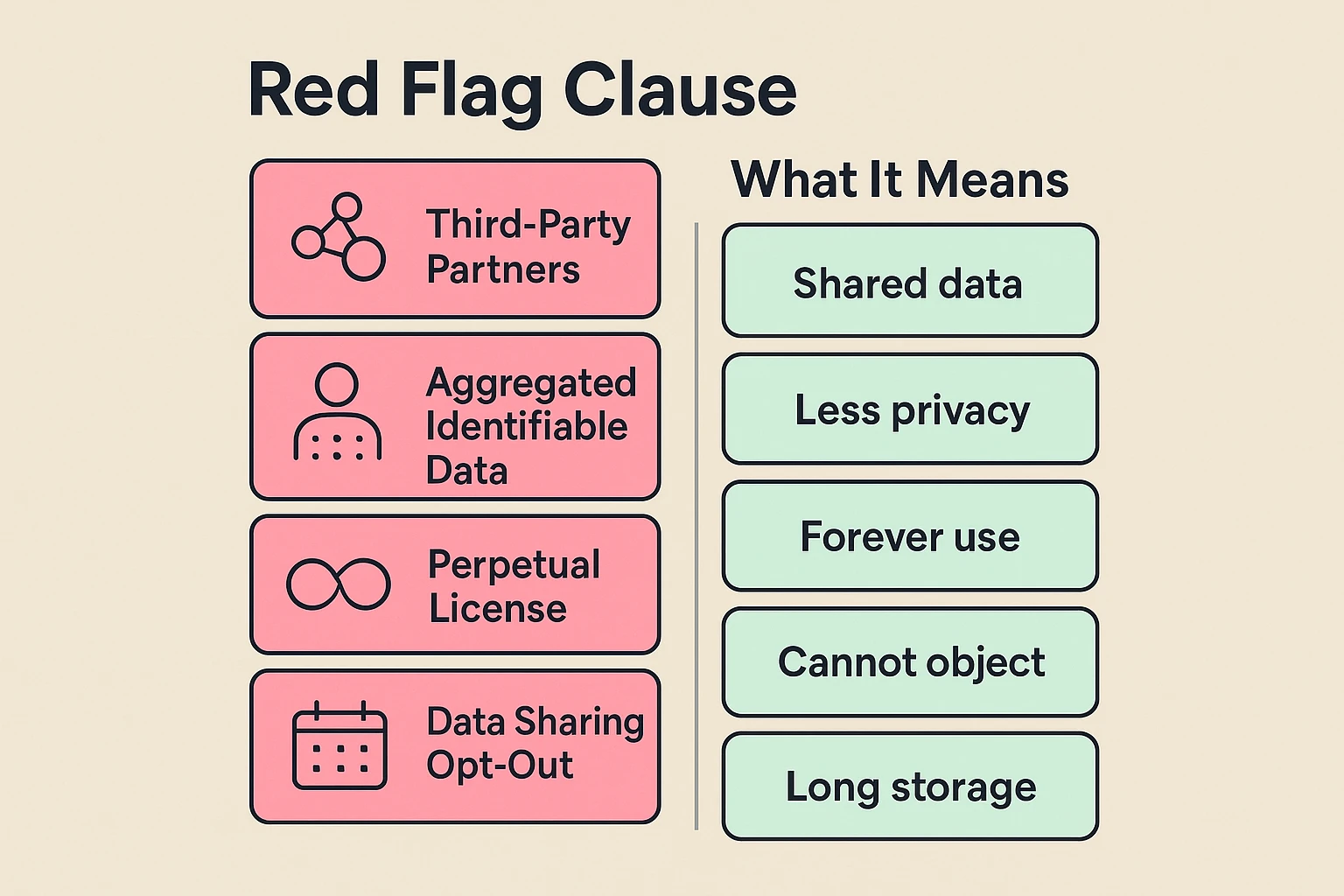

Let’s decode some of this legal mumbo-jumbo into plain English. If you see the phrase “Shared with third-party partners,” that translates to: “We might sell your information to advertisers who really want you to buy a new mattress.”

If you spot “Aggregated but identifiable,” it means they lump your data with others, but a clever hacker could still probably figure out it’s you. And “Perpetual license”? That means they keep the right to use your data forever, even after you unplug the machine and throw it in a woodchipper.

Don’t panic and toss your smart speaker out the window just yet. You have power here. You just need to dive into the settings menu and flip a few crucial switches.

We like to call this the “30% Rule.” Ask yourself: how much data does this thing actually need to function (about 30%), and how much is just extra “surplus” data it’s hoarding for marketing? Your goal is to cut off the surplus.

For mainstream devices like Amazon Alexa or Google Home, go directly to the privacy settings in their smartphone apps. Look for options labeled “Help improve our products” or “Save voice recording history.” Turn those off faster than you’d hang up on a telemarketer during dinner.

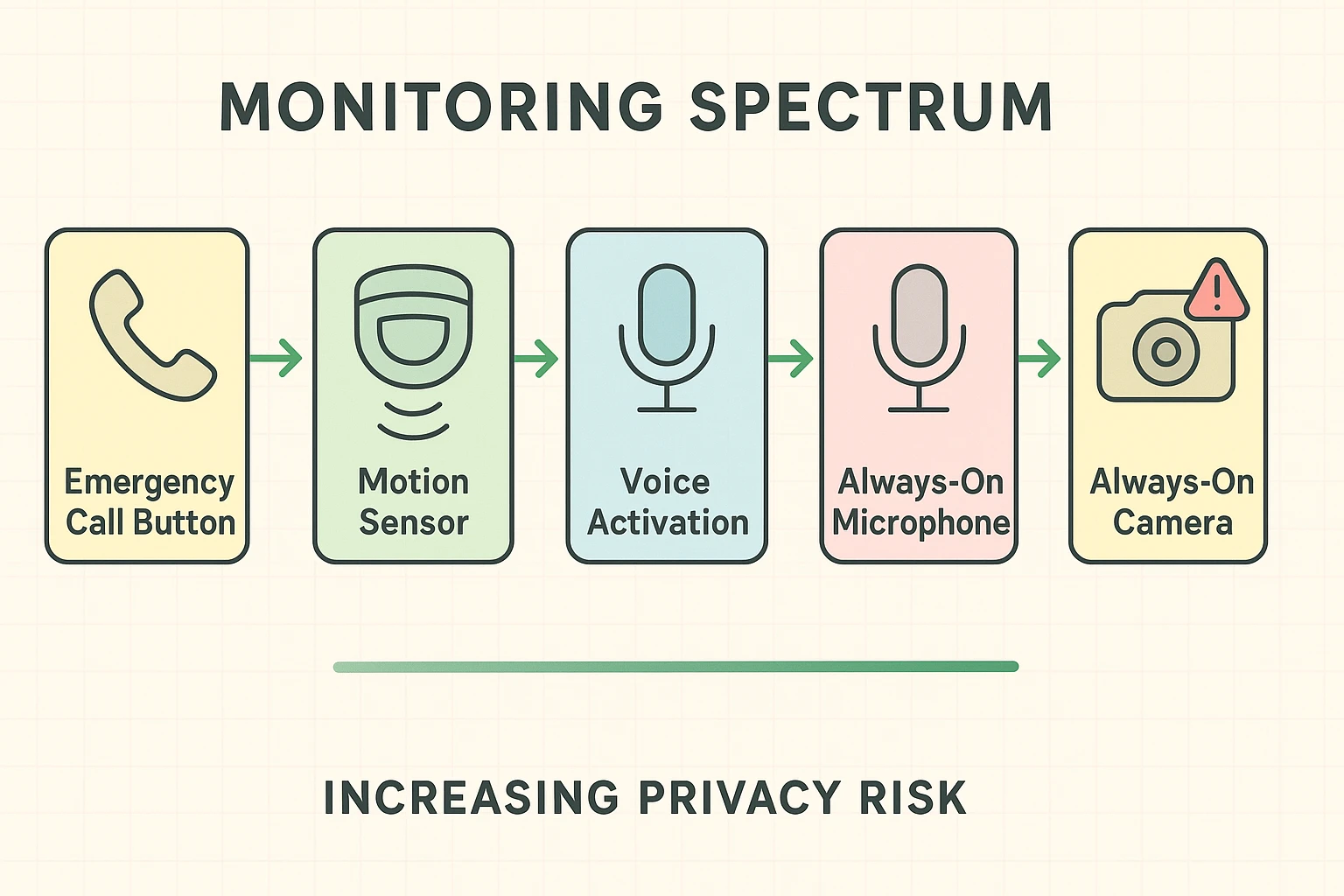

For senior-specific companions like ElliQ, sit down with a family member and review the monitoring spectrum. Decide if you only want the AI to listen when you press a physical button (Active), or if you are comfortable with it constantly listening for a wake word (Passive).

Here is a sensitive but vital topic: what happens when a senior using an AI companion begins to experience cognitive decline? If an older adult with dementia begins to believe their AI is a real, living person, the privacy risks skyrocket.

They might freely give out their Social Security number, banking details, or deeply personal family secrets to a chatbot. This is where family caregivers need to step in and practice what legal experts call “Dynamic Consent.”

Dynamic consent simply means that privacy isn’t a one-and-done setup. As a senior’s cognitive state changes, their device settings need to be continually tightened. What was once a fun conversational tool might need to be downgraded to a simple, locked-down digital clock that only plays music.

It’s crucial for families to regularly audit the device’s interaction history. This isn’t snooping; it’s protecting a vulnerable loved one from accidentally handing the keys to their digital life over to a computer server.

Usually, yes and no. Most devices are in a “passive listening” state, waiting to hear their specific wake word. However, they do occasionally make mistakes and record things they shouldn’t. That’s why diving into your settings and turning off “save voice recordings” is critical.

This is a fascinating and somewhat scary legal gray area. Some elder law attorneys warn that if a device records a senior repeatedly showing signs of severe confusion, those recordings could theoretically be subpoenaed in a legal dispute over guardianship.

Read the fine print, and you’ll find the uncomfortable truth. While you own the physical plastic tube sitting on your counter, the digital dossier it builds about your daily habits belongs strictly to the tech corporation.

Absolutely. Voice cloning AI is a real and growing threat. Scammers can use short snippets of your voice—potentially harvested from hacked databases—to mimic you and call your relatives asking for emergency money. Keeping your voice data locked down minimizes this risk.

Embracing modern technology shouldn’t mean sacrificing your privacy on the altar of convenience. AI companions can be wonderful, entertaining additions to your daily routine, provided you treat them with a healthy dose of informed caution.

Before you bring one of these digital chatterboxes into your home, take ten minutes to do a quick privacy audit. Check the app settings, opt out of data sharing programs, and establish your personal house rules.

Remember, you are the boss of your own home—even if your new roommate is a highly intelligent robot. Stay curious, stay cautious, and don’t let Big Tech bully you into giving away your digital secrets!