
Remember when the most ethically questionable piece of technology in your house was the television remote, simply because your spouse refused to share it? Ah, the good old days. You worried about the VCR endlessly flashing 12:00, not whether your “smart” coffee maker was secretly judging your caffeine habits.

Today, the digital landscape is wildly different. We are surrounded by gadgets designed to help us age gracefully, track our health, and stay connected. But navigating these new devices often feels like swimming in a digital ocean surrounded by data-hungry sharks. You just wanted a watch that counts your steps, and suddenly it’s asking for permission to track your location, read your emails, and possibly take a mortgage out in your name.

If you are currently evaluating health tech, medical alerts, or smart home devices for yourself or a loved one, you aren’t just comparing prices. You are stepping right into the ethical frontiers of aging tech. We’re going to break down exactly what you need to know about data governance, algorithmic bias, and digital consent, all in plain English, with zero tech-bro jargon.

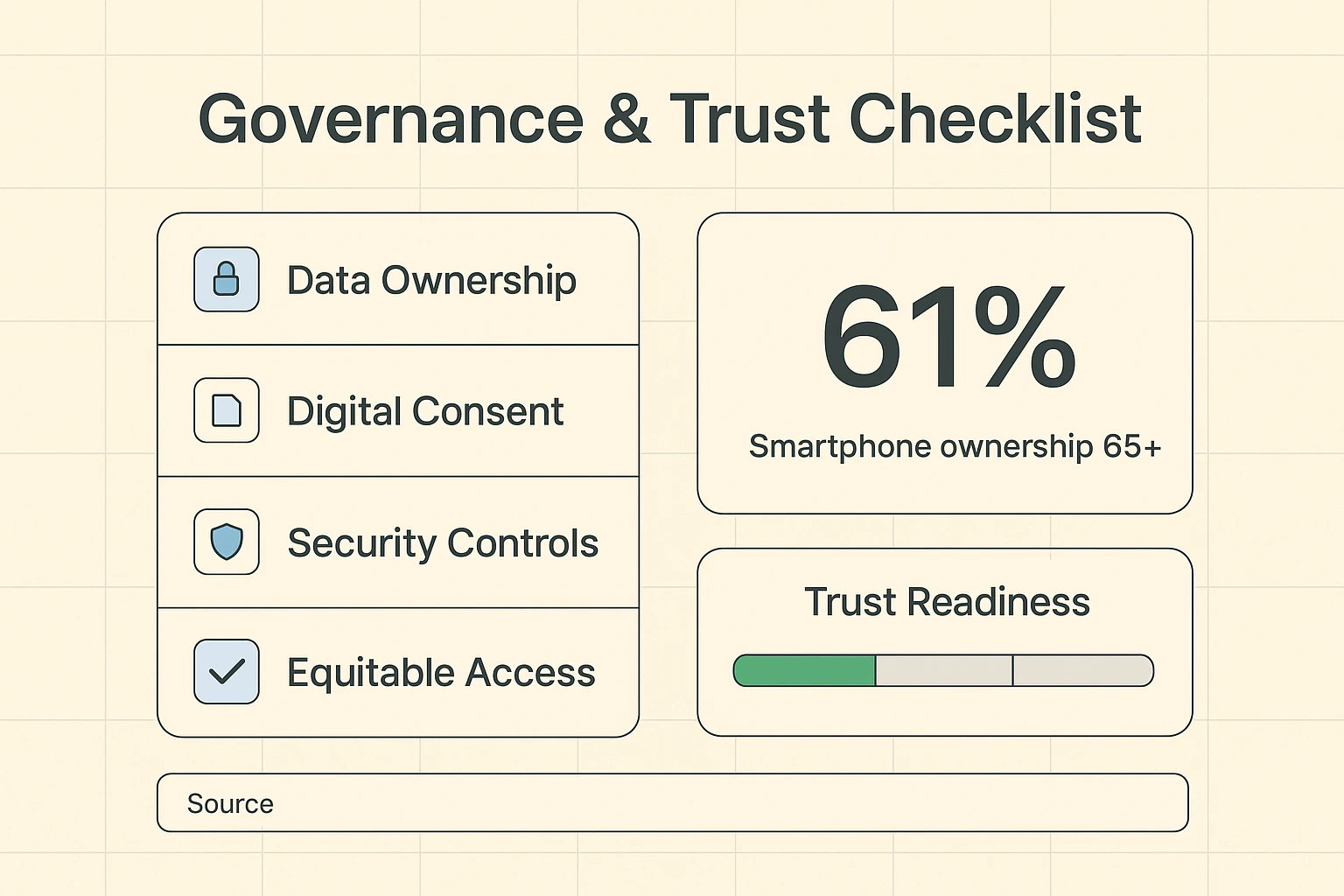

Let’s bust a myth right off the bat: the idea that older adults are terrified of technology is pure fiction. Recent studies show that 61% of adults aged 65 and older own smartphones. Furthermore, telehealth visits among the 70+ crowd skyrocketed from a mere 4.6% pre-pandemic to a robust 21.1%.

Right now, an impressive 64% of surveyed older adults use telehealth, and 36% rely on wearable pendant alarms for safety. Seniors are absolutely adopting technology. The problem isn’t that you can’t learn how to use a tablet; the problem is that the tech industry often designs these tools with all the grace of a bull in a china shop.

Researchers have found that a major barrier to using these tools isn’t a lack of ability, but a lack of trust. In fact, 18% of older adults cite privacy and trust concerns as their primary reason for avoiding digital health tools. And honestly? You have every right to be suspicious.

There is a bizarre phenomenon in Silicon Valley. When engineers decide to build technology for anyone over the age of 55, they suddenly forget how to design sleek, functional devices. Instead, they give us giant, clunky buttons in neon colors, assuming we’ve lost our sense of style along with our landlines.

This medical and societal ageism directly impacts digital inclusion. Technology companies often assume older adults only need basic safety features, completely ignoring the desire for comprehensive health management, fitness tracking, and social connection.

When you are evaluating a technology provider, look closely at how they treat their users. If their marketing talks down to you, or if the device feels like a toy rather than a tool, they probably haven’t put much thought into the serious ethical frameworks behind the scenes, either.

You’ve probably heard of Artificial Intelligence (AI). Tech companies love to talk about AI like it’s a magical wizard living inside your computer. In reality, AI is more like a very fast, very obedient teenager. It only knows what it has been taught, and it lacks common sense.

Here is where algorithmic bias sneaks in. If a tech company trains its AI health software entirely on data from 25-year-old marathon runners, the software is going to get very confused when an 80-year-old uses it. It might flag perfectly normal heart rates for a senior as a medical emergency, or worse, ignore actual warning signs because they don’t match the “young” data.

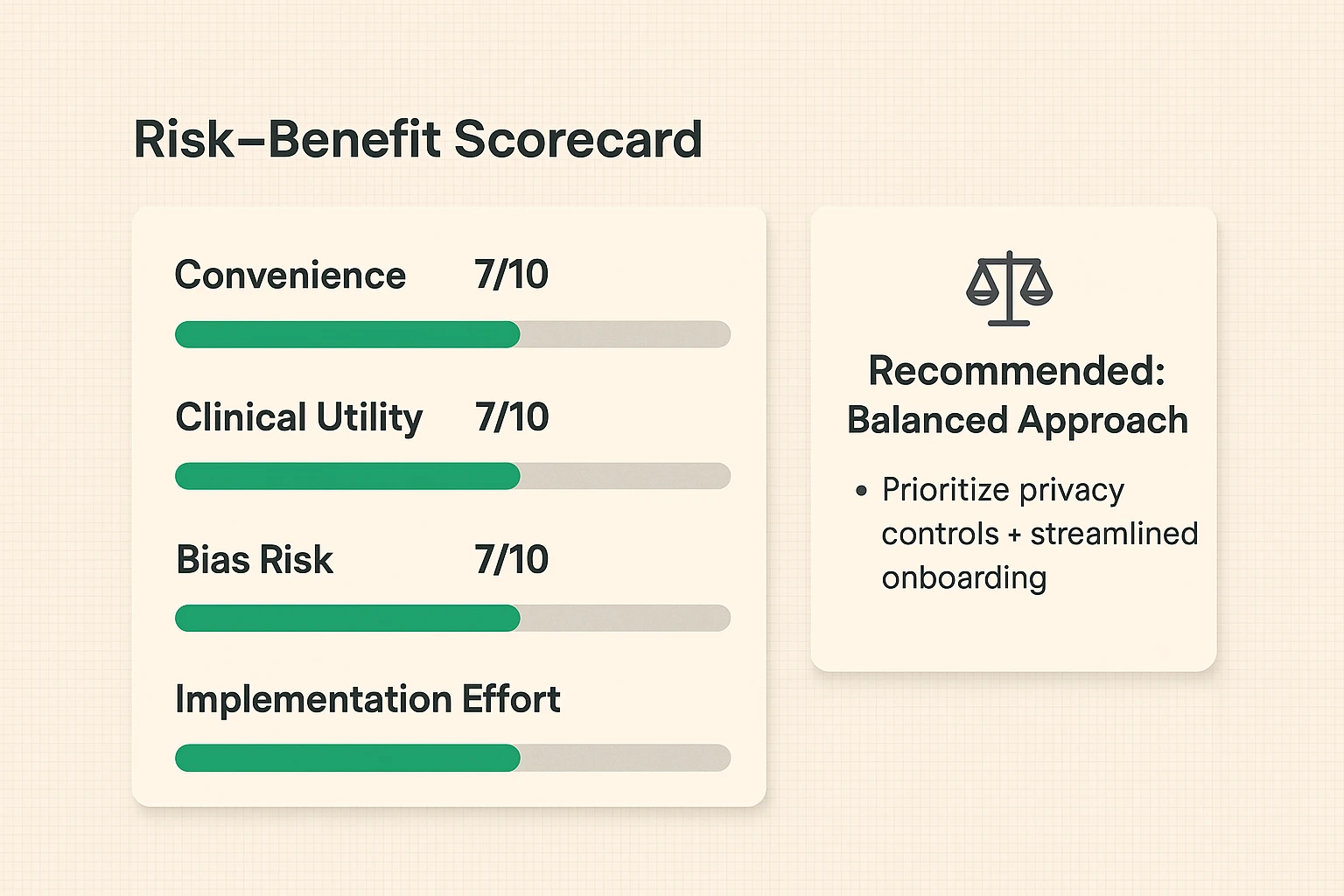

When choosing a digital health platform or a smart monitoring system, you must ask the right questions. Was this technology tested on older adults? Does the company actively work to eliminate ageist bias in its algorithms? If the sales rep looks at you blankly when you ask, it’s time to take your wallet elsewhere.

We have all been there. You download a new app to track your blood pressure, and before you can use it, you are presented with a “Terms of Service” agreement. It is 47 pages long, written in legal gibberish, and the only option is a tiny “I Agree” button at the bottom.

This is the illusion of digital consent. Tech companies know you aren’t going to read a novel before logging your morning vitals. But by clicking that button, you might legally be allowing them to share your highly sensitive personal health information with third-party advertisers. Your medical history shouldn’t be treated like a bake sale flyer passed around town.

Before you hand over your personal data, always check the company’s website for a plain-English privacy policy. A trustworthy company will explicitly state, “We do not sell your health data.” If it hides behind vague phrases like “we share data with trusted partners to enhance your experience,” that’s corporate speak for “we are making money off your cholesterol levels.”

Another massive ethical frontier is equitable access. Modern aging technology is incredible, but it often comes with a hefty price tag and requires a rock-solid Wi-Fi connection. If life-saving fall detection and telehealth services are only available to those with deep pockets, we have a serious ethical problem on our hands.

Furthermore, we need to talk about data ownership. Who owns the data generated by your pacemaker, your CPAP machine, or your smart pill dispenser? Spoiler alert: it should be you.

When evaluating a service, look for vendors that champion senior digital rights. You should always have the ability to download your own data, delete your history, and easily revoke access to anyone you no longer wish to share it with.

Ultimately, adopting aging technology requires balancing convenience against privacy. An indoor security camera can be a fantastic way for a caregiver to ensure a loved one hasn’t fallen. But it is also a lens pointing directly into someone’s private living space.

Research shows that 100% of the time, social and organizational influences act as the primary enablers for tech adoption among seniors. This means having supportive family members, doctors, and communities helping to set up these tools makes all the difference.

The goal isn’t to be so terrified of data breaches that you throw your smartphone into the nearest lake. The goal is to make informed, empowered decisions. Choose devices that default to the highest privacy settings, require two-factor authentication, and clearly respect your digital boundaries.

Yes, provided the app is HIPAA-compliant (in the US) or meets your local medical privacy laws. Legitimate healthcare providers use secure, encrypted platforms. Just make sure you are using a private Wi-Fi network at home, rather than the free Wi-Fi at your local coffee shop, to discuss your bunions.

Look for transparency. Trustworthy companies publish information about how they test their products. If you are comparing two medical alert systems, check their websites for case studies or clinical trials specifically involving adults over 65.

Ask yourself: does this app actually need to know where I am to do its job? A maps app needs your location. A crossword puzzle app absolutely does not. Always choose “Ask Next Time” or “While Using the App” instead of “Always Allow.”

Yes. Reputable companies provide a clear mechanism in their account settings to permanently delete your data. If they make you jump through flaming hoops to leave their ecosystem, they are not practicing responsible technology development.

You deserve technology that works for you, respects your privacy, and doesn’t treat your age like a medical condition. As you evaluate different digital health tools, remember that you are in the driver’s seat.

Demand transparency, protect your personal information like the treasure it is, and never settle for a device that makes you feel silly. Technology is supposed to be the smart one, after all. Make it work for your lifestyle, safely and securely.