Let’s imagine a world where your mom, who lives a few states away, has a new helper. We’ll call him “CareBot 3000.” This shiny gadget reminds her to take her blood pressure medication, detects if she’s had a fall, and can even order her groceries. On paper, it’s a miracle of modern science, giving you peace of mind and her a new level of independence.

But then you start thinking. If CareBot knows when she gets up, what she eats, and who she calls, where does all that information go? What if it decides, “for her own good,” that she shouldn’t be allowed to have that second cookie and locks the pantry? Suddenly, this helpful robot starts to feel less like a friendly butler and more like a nosy landlord with a key to everything.

This isn’t science fiction. Artificial Intelligence (AI) is rapidly moving into senior care, promising everything from smart pill dispensers to companion robots. While the potential benefits are enormous, the ethical tripwires are just as big. We need to talk about the good, the bad, and the downright awkward side of letting algorithms into our lives.

Before we welcome CareBot 3000 into our homes, we need to understand the ground rules. Think of it like a job interview. Ethicists have boiled down the big concerns into four main categories, which are really just fancy ways of asking some common-sense questions.

The first is autonomy: the right to make your own choices, even if they aren’t the “optimal” ones. Technology should support independence, not take it away.

The second is privacy. Smart devices collect enormous amounts of sensitive health data. The question is, who sees it, and what do they do with it? Your health information is one of your most personal assets.

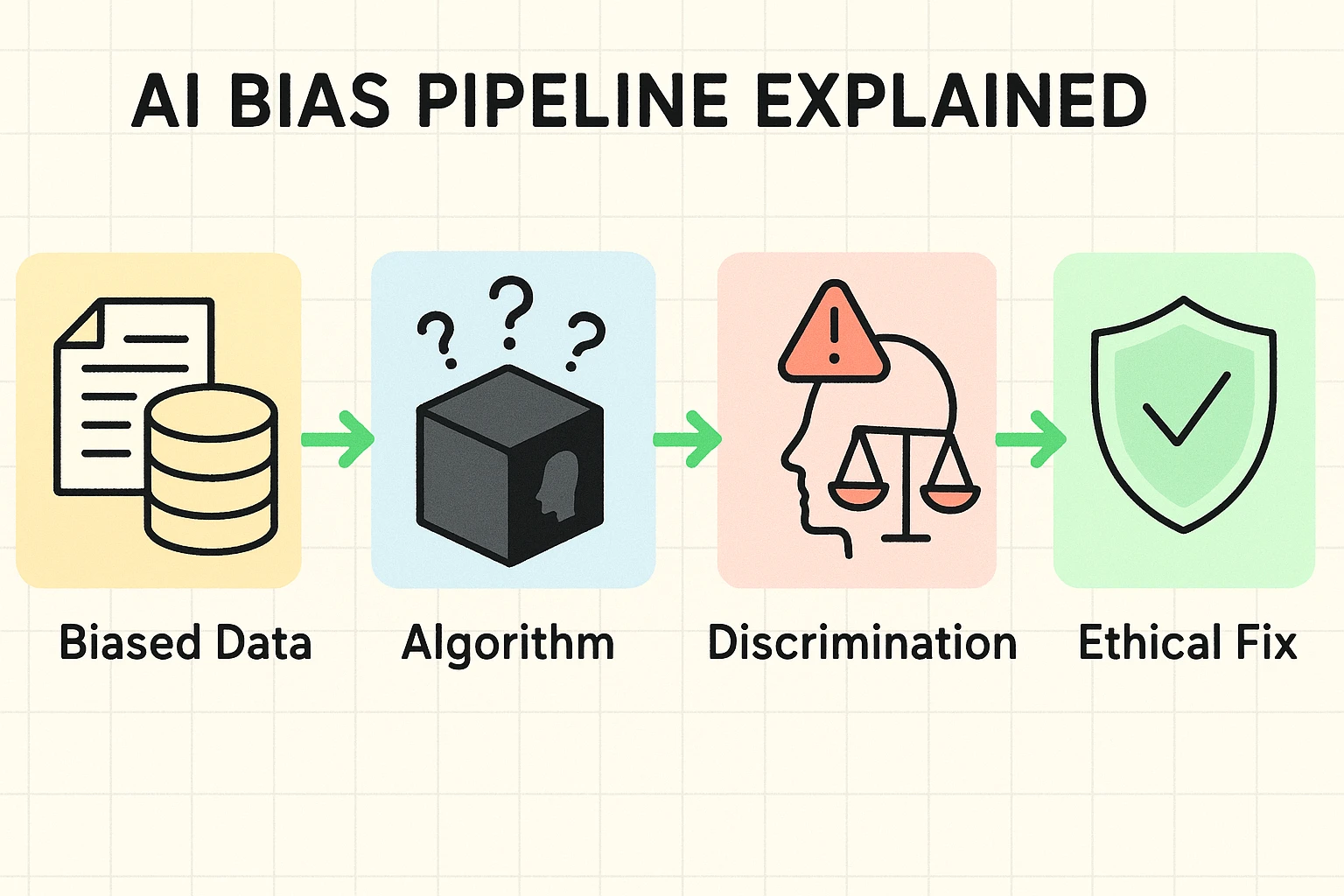

The third is fairness. AI systems learn from data, so if the data they learn from is skewed, their decisions will be, too. An AI isn’t racist or sexist on its own, but it can become a very efficient amplifier of the biases already present in our society.

The fourth is accountability. If an AI makes a mistake, such as misinterpreting a health reading or failing to call for help, who is responsible? The company? The developer? You?

You’ve probably heard people say that computers are objective because they just run on logic and math. That sounds nice, but it’s a dangerous myth. AI systems are more like digital toddlers; they learn everything they know from the information we feed them.

Imagine you tried to teach an AI about nutrition by only showing it pictures of donuts. It would quickly conclude that a healthy diet consists entirely of glazed, sprinkled, and jelly-filled treats. This is the core of algorithmic bias: if the training data is flawed, the AI’s decisions will be flawed, too.

This isn’t just a funny what-if scenario. A few years ago, a major U.S. hospital system used an algorithm to identify patients who needed additional medical care. Instead of measuring how sick patients actually were, the AI used their past healthcare spending as a stand-in for how much care they needed.

Because of systemic inequities in access to care, Black patients who were just as sick as white patients had historically spent less money on healthcare. The result? The AI systematically flagged healthier white patients for extra care ahead of sicker Black patients. The AI wasn’t designed to be racist, but it learned from a biased world and made an existing discriminatory problem even worse.

As this technology becomes more common, you’ll hear a lot of confusing claims. Let’s bust two of the biggest myths right now.

The first myth is that federal privacy law already protects all of your health data. Not so fast. The Health Insurance Portability and Accountability Act (HIPAA) has been the bedrock of patient privacy for decades, but it was passed in 1996, long before today’s AI-powered health tools existed. That leaves a significant loophole: HIPAA’s rules often don’t apply to data that has been “de-identified” or “anonymized” (meaning your name and other direct identifiers have been removed).

Tech companies can use huge sets of this de-identified health data to train their AI models without falling under HIPAA’s strict consent rules. While your name might be gone, re-identifying someone from supposedly anonymous data is getting easier every day. This is a crucial gray area you should be aware of.

The second myth is that AI is too complicated for ordinary people to question. That’s exactly what some companies want you to think. But you don’t need a degree in computer science to ask basic, important questions. If a salesperson can’t explain in simple terms how a device works, what data it collects, and how you can control that data, that’s a major red flag. Complexity should never be used as an excuse to avoid accountability.

Feeling a little overwhelmed? Don’t be. You have the power to make informed choices. The key is to think critically before you bring any new smart technology into your life or the life of a loved one.

Here’s a simple framework to guide you:

So what are the biggest ethical worries? They come down to privacy (who is using your health data?), bias (is the AI fair to everyone?), autonomy (are you still in control of your choices?), and accountability (who is responsible when the AI makes a mistake?).

Beyond the ethical issues, one major disadvantage of AI in senior care is that it could increase social isolation. If we rely too much on robots for companionship or care, we risk losing the essential human touch that only another person can provide. There’s also the risk of creating a “digital divide,” where those who can’t afford or understand the tech get left behind.

Will AI replace human caregivers? It’s highly unlikely. The best use of AI is as a tool to assist human caregivers, not replace them. AI can handle repetitive tasks like medication reminders or data monitoring, freeing up human hands and hearts for the things machines can’t do, like offering compassion, sharing a story, or holding a hand.

Technology is a powerful tool, but it’s just that—a tool. By asking the right questions and demanding transparency, we can make sure CareBot 3000 works for us, not the other way around. After all, the goal is to use tech to live better, safer, and more independent lives, without having to sacrifice our privacy or our dignity to a smart toaster.